The first line of the Wikipedia page for ‘uncanny valley’ describes it as “a hypothesized relationship between the degree of an object’s resemblance to a human being and the emotional response to such an object”. Even when they are painstakingly crafted, animated human characters in movies still don’t pass for human. But why?

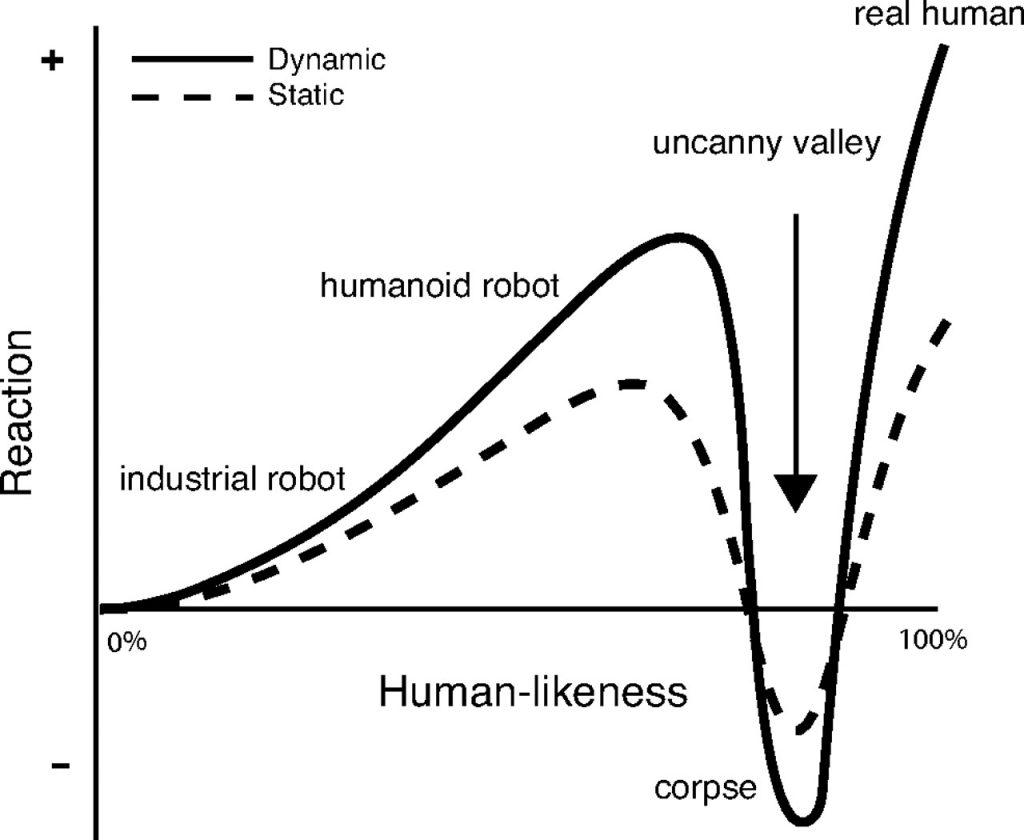

The uncanny valley is the phrase used to describe the disbelief, unease, strangeness, disgust, or creepiness we feel when confronted with synthetic humans on screen that we can’t accept as real. The graph below explains the problem perfectly and succinctly.

For many reading this, I’m teaching my grandmother to suck eggs, but for those who have perhaps not previously paid much attention to this phenomenon, first watch a real-life Alicia Vikander as the robot Ava in the trailer for Ex Machina below as a reference point.

You’re probably familiar with the old saying “eyes are the window to the soul”? The problem of the uncanny valley is partly to do with the eyes – both pupil dilation and contraction due to changing lighting conditions, and the movement of our eyes in their sockets. We don’t just look at something with a fixed gaze. We’re constantly and involuntarily surveying our surroundings, looking left, right, up and down even when we are focussing on something right in front of us. Think about what you are doing when you are reading a book or a broadsheet newspaper, for example. We do it all the time, all day long, and we don’t even know it.

Essentially, at a primal level, our brains are hardwired to be always on the lookout for threats, but our eyes are also our primary tool of base-level communication: for social interaction, conflict resolution, empathy, understanding, a need to be liked, understood, appreciated, respected … obeyed, and so on. Alita’s eyes are unnaturally large, so not wholly representative, but take a close look at her eyes and face in this clip from Alita: Battle Angel as an example of how far the state of the art has managed to get. Close, but not close enough.

As well as our eyes, our facial features are also in constant, almost imperceptible motion – wrinkles, frowns, facial tics, movements based on our age, weight and physiognomy – showing, randomly and sometimes simultaneously, a whole range of emotions. Real flesh-and-blood people are fiendishly difficult things to recreate, and our brains are so acutely tuned to spotting creative imperfections that cracking this problem is something of a final frontier for movie makers. After years of Botox, Michael Douglas’s own face is fairly static, but the CG team seem to have taken things a stage further when they de-aged him for his role as a young Hank Pym in Ant-Man. Too perfect, and that is the problem in a nutshell.

It’s nobody’s fault that the uncanny valley is yet to be fully bridged. It’s a really, really hard problem to solve, but the VFX wizards will eventually crack it. Or will they? Is it a job for that infinite number of monkeys? Can we throw artificial neural networks and humungous amounts of compute power at the problem? While you think about that, here’s a deepfaked Jim Carrey as Joker to twist your melon.

For a more in-depth, and much better, explanation of the uncanny valley phenomenon, here’s an article on verywellmind.com to help you along.