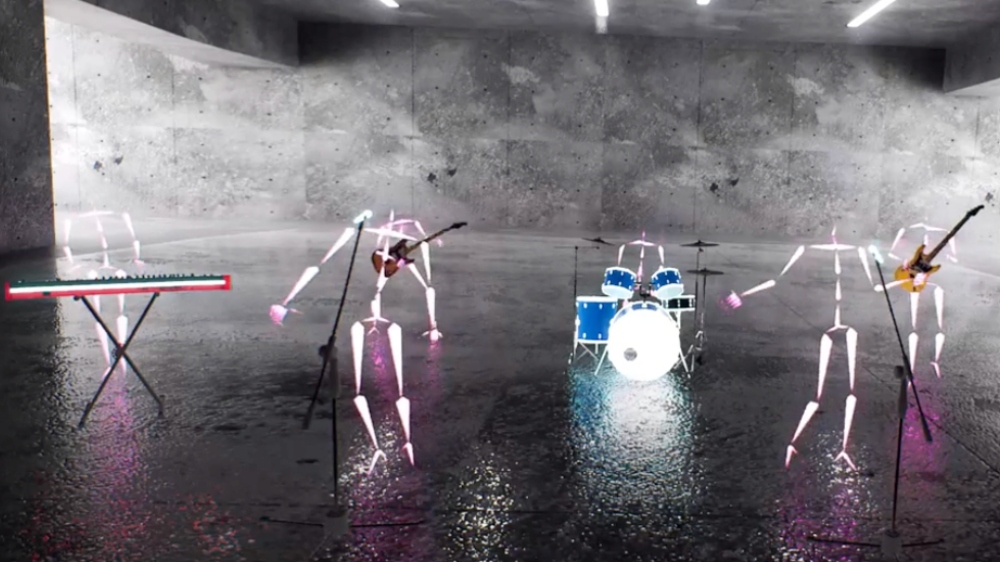

At the Dolby House at SXSW today (Weds 15 March), our friends at Draw & Code combined Dolby.io’s real-time streaming technology and Move.ai’s motion capture system to stream a technology demo of a future performance capture pipeline for the music industry.

The experiment, which was conducted in conjunction with KOKO in London’s Camden Town, involved delivering a live performance by rising UK soul star Jake Isaac as a virtual reality experience.

There’s a general feeling that experiencing a performance virtually lacks the immediacy and intimacy of watching somebody play live. This lot were out to change minds.

The exercise was designed to show how live music can be delivered as a digital twin of the real thing, geared towards the needs of artists and their fans, rather than as a faked-up simulacrum that has to work within the restrictions of whichever digital delivery platform is chosen.

Compared with setting up in a studio space, using a real music venue with an audience in the room is great for the artist in terms of staging, sound and atmosphere, especially if the joint is jumping. And because Move.ai is a markerless tracking system, no suits are required: artists can wear their normal stage outfits, so the nuance and naturalness of a performance are captured fully.

If you would like to know more about how this type of virtualised, immersive experience could be created for any kind of live performance, contact John Rowe (john@mondatum.com) or Colin Birch (colin@mondatum.com).

Source: Draw & Code

Main image: Zermatt Unplugged