OpenAI describes its new tool as “an AI model that can generate realistic and imaginative scenes from text instructions”.

We’re teaching AI to understand and simulate the physical world in motion, with the goal of training models that help people solve problems that require real-world interaction.

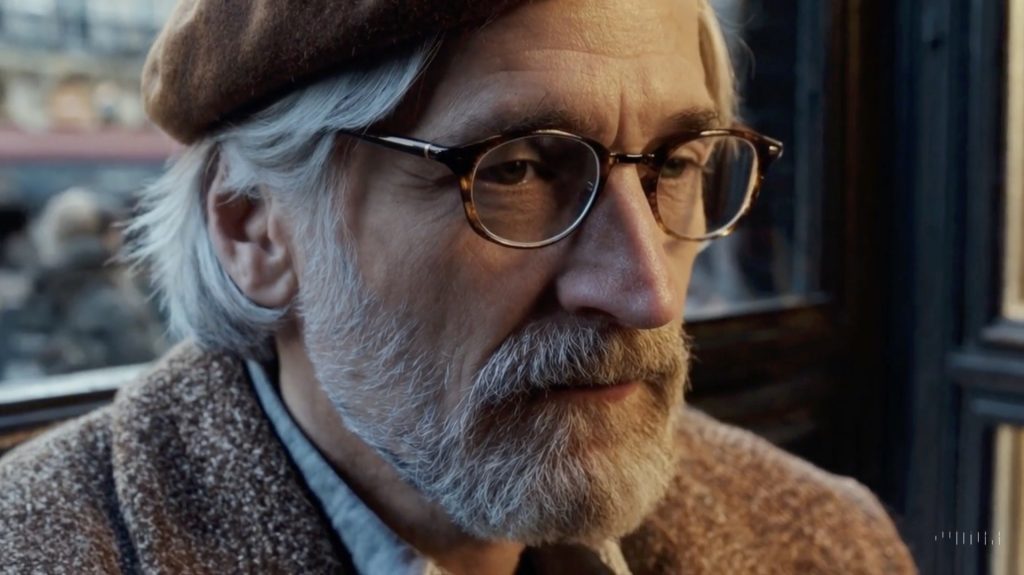

Introducing Sora, our text-to-video model. Sora can generate videos up to a minute long while maintaining visual quality and adherence to the user’s prompt.

Sora is able to generate complex scenes with multiple characters, specific types of motion, and accurate details of the subject and background. The model understands not only what the user has asked for in the prompt, but also how those things exist in the physical world.

The model has a deep understanding of language, enabling it to accurately interpret prompts and generate compelling characters that express vibrant emotions. Sora can also create multiple shots within a single generated video that accurately persist characters and visual style.

OpenAI

To create the consistent perspective seen here in the art gallery, the reflections in the subway video, or videos where the camera pans away and back and the objects stay consistent in the 3d space, the model needs to understand how to in essence simulate a physics engine to create very compelling temporal consistency (much more than everything I’ve seen up until this point).

This isn’t video generation, this is data driven multi-modal #AI world simulation that happens to output to a mov.

Gary Palmer, CEO, Electric Sheep

What are some of the really clever things it can do? (From a post by Endrit Restelica on LinkedIn.)

- it is capable of extending videos, either forward or backward in time, for example to produce a seamless infinite loop

- it can generate videos with dynamic camera motion – as the camera shifts and rotates, people and scene elements move consistently through three-dimensional space

- it is often, though not always, able to effectively model both short- and long-range dependencies – for example, the model can persist people, animals and objects even when they are occluded or leave the frame and it can generate multiple shots of the same character in a single sample, maintaining their appearance throughout the video

- it can sample widescreen 1920×1080 videos, vertical 1080×1920 videos and everything in between – this lets Sora create content for different devices directly at their native aspect ratios
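
To make the aspect ratio point concrete, here is a minimal Python sketch that reduces a pixel resolution to its simplest width:height ratio. The helper name `aspect_ratio` is ours, purely for illustration, and has nothing to do with Sora or any OpenAI API. It only shows the arithmetic behind the two resolutions quoted above.

```python
from fractions import Fraction


def aspect_ratio(width: int, height: int) -> str:
    """Reduce a pixel resolution to its simplest width:height ratio.

    Hypothetical helper for illustration only; not part of any Sora API.
    """
    ratio = Fraction(width, height)  # Fraction reduces to lowest terms
    return f"{ratio.numerator}:{ratio.denominator}"


# The two resolutions mentioned in the list above.
print(aspect_ratio(1920, 1080))  # widescreen
print(aspect_ratio(1080, 1920))  # vertical
```

The widescreen resolution reduces to 16:9 and the vertical one to 9:16, the formats typically used for televisions and phone screens respectively.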

More examples of videos generated using Sora

- Robot – https://lnkd.in/dgebrpYd

- Crab – https://lnkd.in/divtMz-d

- Amalfi – https://lnkd.in/dDQTVsRq

- Train – https://lnkd.in/dcHj6Hcy

- TVs – https://lnkd.in/daZUQqX9

- Nigeria – https://lnkd.in/d54G_xwa

- Pirate – https://lnkd.in/dvFSM-Pt

There are restrictions. For example, it can only produce videos up to one minute long.

The current model has weaknesses. It may struggle with accurately simulating the physics of a complex scene, and may not understand specific instances of cause and effect. For example, a person might take a bite out of a cookie, but afterward, the cookie may not have a bite mark.

The model may also confuse spatial details of a prompt, for example, mixing up left and right, and may struggle with precise descriptions of events that take place over time, like following a specific camera trajectory.

OpenAI

It’s here, it’s not going anywhere and it’s only going to get stronger and better. How are you going to use it?

For advice, guidance and support relating to the safe, reliable use of generative video and other machine learning-based content origination tools in your forthcoming projects, please get in touch with Colin Birch (colin@mondatum.com) or John Rowe (john@mondatum.com) at Mondatum.