Machine learning research is an expensive and energy-hungry business. Training a single large machine learning model can, by one estimate, generate carbon emissions equivalent to building and driving five cars over their lifetimes. But why?

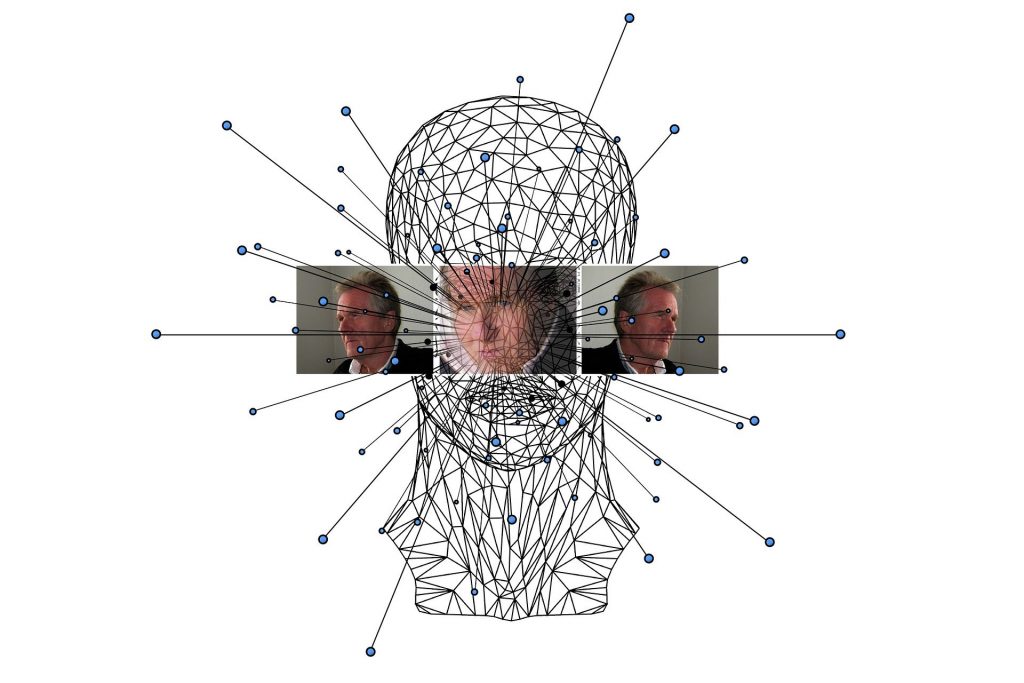

Artificial neural networks learn by repeatedly adjusting the weights on the connections between their neurons until the output matches the desired answer: for example, until the network plays a game competently or correctly recognises an image as that of a cat. This can take the equivalent of many years of compute time, so you have to throw an awful lot of compute power at the problem to cut that down to months or weeks. On top of that, there’s a lot of twiddling and optimisation to be done. How many neurons? How many connections between neurons? How fast should the parameters change during learning? And the whole process might have to be repeated many thousands of times to get a satisfactory result or to make a small but significant improvement.
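
To see how quickly those questions multiply the work, here is a minimal Python sketch that counts the full training runs an exhaustive search would need. The search space, its values and the `count_training_runs` helper are hypothetical and purely illustrative; real projects often use smarter search strategies than trying every combination.

```python
from itertools import product

# Hypothetical search space: names and values are illustrative only.
search_space = {
    "hidden_units": [128, 256, 512, 1024],   # how many neurons per layer?
    "num_layers": [2, 4, 8],                 # how deep is the network?
    "learning_rate": [1e-2, 1e-3, 1e-4],     # how fast do the weights change?
}


def count_training_runs(space, repeats_per_config=1):
    """Return the number of full training runs an exhaustive grid search needs."""
    # Every combination of one value per setting is a separate configuration.
    configs = list(product(*space.values()))
    return len(configs) * repeats_per_config


if __name__ == "__main__":
    runs = count_training_runs(search_space, repeats_per_config=3)
    print(f"Full training runs required: {runs}")
```

Even this tiny grid of three settings needs dozens of complete training runs, and each extra setting multiplies the total again.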

Researchers at the University of Massachusetts Amherst estimated the energy cost of developing AI language models by measuring the power consumption of common hardware used during training. They found that training one particular model, BERT (Bidirectional Encoder Representations from Transformers), just once has roughly the carbon footprint of a passenger flying a round trip between New York and San Francisco. When they then searched over different structures (training the algorithm multiple times on the data with slightly different numbers of neurons, connections and other parameters), the cost shot up to the equivalent of 315 passengers, or an entire 747 jet.
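
The arithmetic behind this kind of estimate can be sketched in a few lines of Python. This is not the researchers' actual method or data: the function names, the power draw, the overhead factor for cooling and the grid carbon intensity below are all assumed values chosen only to show the shape of the calculation.

```python
def training_energy_kwh(device_watts, num_devices, hours, overhead_factor=1.0):
    """Energy in kWh: average power draw x device count x time x facility overhead."""
    return device_watts * num_devices * hours * overhead_factor / 1000.0


def emissions_kg_co2(energy_kwh, kg_co2_per_kwh):
    """Convert energy use into emissions for a given grid carbon intensity."""
    return energy_kwh * kg_co2_per_kwh


if __name__ == "__main__":
    # All numbers here are assumptions for illustration, not measurements.
    one_run_kwh = training_energy_kwh(
        device_watts=300,      # assumed average draw per GPU
        num_devices=8,         # assumed number of GPUs
        hours=100,             # assumed training time
        overhead_factor=1.5,   # assumed extra energy for cooling and facilities
    )
    one_run_kg = emissions_kg_co2(one_run_kwh, kg_co2_per_kwh=0.4)
    search_kg = one_run_kg * 100  # assumed 100 runs in an architecture search

    print(f"One training run: {one_run_kwh:.0f} kWh, {one_run_kg:.0f} kg CO2")
    print(f"Search over 100 runs: {search_kg:.0f} kg CO2")
```

The point of the sketch is that emissions scale linearly with every factor: more devices, longer runs, more repeats, less efficient cooling or a dirtier grid each push the total up in direct proportion.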

Then again, larger networks tend to be more accurate. The much-lauded GPT-2 has 1.5 billion weights in its network. The even more awesome GPT-3 has 175 billion weights. Spending more upfront on training a model well can also make it cheaper to run, which lowers the total cost and energy consumption attributed to it over its lifetime.

GPUs are needed for all this processing, as CPUs are not up to the job. While GPUs are much more powerful, they also consume more power and generate more heat, which requires more cooling, which in turn consumes more power. You get the picture. Hooking these power-hungry servers up to sources of ‘green’ power is now an essential consideration for AI labs, even when they use more efficient new server hardware and cooling technology.

If you are interested in exploring the use of machine learning and want advice on how to build and run cost-effective, energy-efficient model training systems, get in touch. We’d be happy to talk through your goals and ambitions and offer guidance.

Source: The Conversation