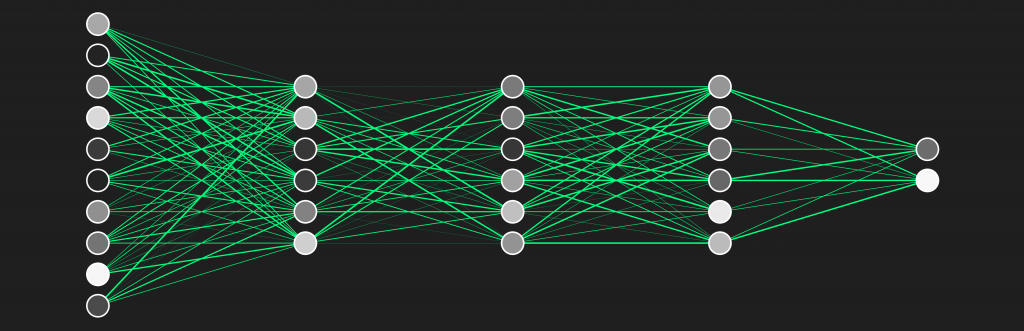

Researchers from the Department of Computer Science at the University of Copenhagen have shown mathematically that, beyond basic problems, it is impossible to develop AI algorithms that will always be stable.

The main purpose of the research was to describe the problem in the language of mathematics and to establish exactly how much noise algorithms must be able to withstand, and how close their output must stay to the original output, for an algorithm to be accepted as stable.
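To make the two quantities in that definition concrete, here is a minimal Python sketch of an empirical stability check. It is not the researchers' method: their definitions concern learning algorithms and are established by proof, not by testing. The function `is_empirically_stable` and its parameters `noise` (how far each input value may be nudged) and `tolerance` (how far the output may drift) are hypothetical names chosen for illustration. Random sampling like this can only expose instability; it can never prove that an algorithm is always stable.

```python
import random


def is_empirically_stable(algorithm, x, noise, tolerance, trials=1000, seed=0):
    """Hypothetical check: perturb each value of x by at most `noise` and
    report whether the output ever moves by more than `tolerance`.
    A True result only means no counterexample was found in the sampled trials."""
    rng = random.Random(seed)
    baseline = algorithm(x)
    for _ in range(trials):
        perturbed = [xi + rng.uniform(-noise, noise) for xi in x]
        if abs(algorithm(perturbed) - baseline) > tolerance:
            return False  # a small input change caused a large output change
    return True


def mean(values):
    # A smooth function: small input changes give small output changes.
    return sum(values) / len(values)


def threshold(values):
    # A hard decision, like a classifier: the output jumps at 0.5.
    return 1.0 if mean(values) >= 0.5 else 0.0


print(is_empirically_stable(mean, [0.2, 0.4, 0.6], noise=0.01, tolerance=0.02))       # True
print(is_empirically_stable(threshold, [0.5, 0.5, 0.5], noise=0.01, tolerance=0.5))   # False
print(is_empirically_stable(threshold, [0.9, 0.9, 0.9], noise=0.01, tolerance=0.5))   # True
```

The smooth `mean` passes, while `threshold` passes far from its decision boundary but fails right at it, loosely echoing how a hard classification can flip under a tiny change such as the sticker in the road-sign example that follows.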

By demonstrating mathematically that, beyond basic problems, no AI algorithm can be guaranteed to be stable, the work sets the scene for improved protocols for testing algorithms and highlights the inherent differences between machine processing and human intelligence.

For example, a human driver would not normally be distracted by a random sticker affixed to a standard road sign, but the systems in a self-driving car might fail to recognise it as a road sign if it does not match the examples in their training set.

“We would like algorithms to be stable in the sense that if the input is changed slightly, the output will remain almost the same. Real life involves all kinds of noise which humans are used to ignoring, while machines can get confused.

I would like to note that we have not worked directly on automated car applications. Still, this seems like a problem too complex for algorithms to always be stable.

If the algorithm only errs under a few very rare circumstances this may well be acceptable. But if it does so under a large collection of circumstances, it is bad news.”

Professor Amir Yehudayoff, University of Copenhagen

Part of the next stage is to take the theories outside the lab to see how they stand up to real-world testing.

Reference: “Replicability and Stability in Learning” by Zachary Chase, Shay Moran and Amir Yehudayoff, 2023, Foundations of Computer Science (FOCS) conference.

DOI: 10.48550/arXiv.2304.03757

Source: SciTechDaily